The True Cost of DIY AI Agents: Why Managed Beats Homegrown

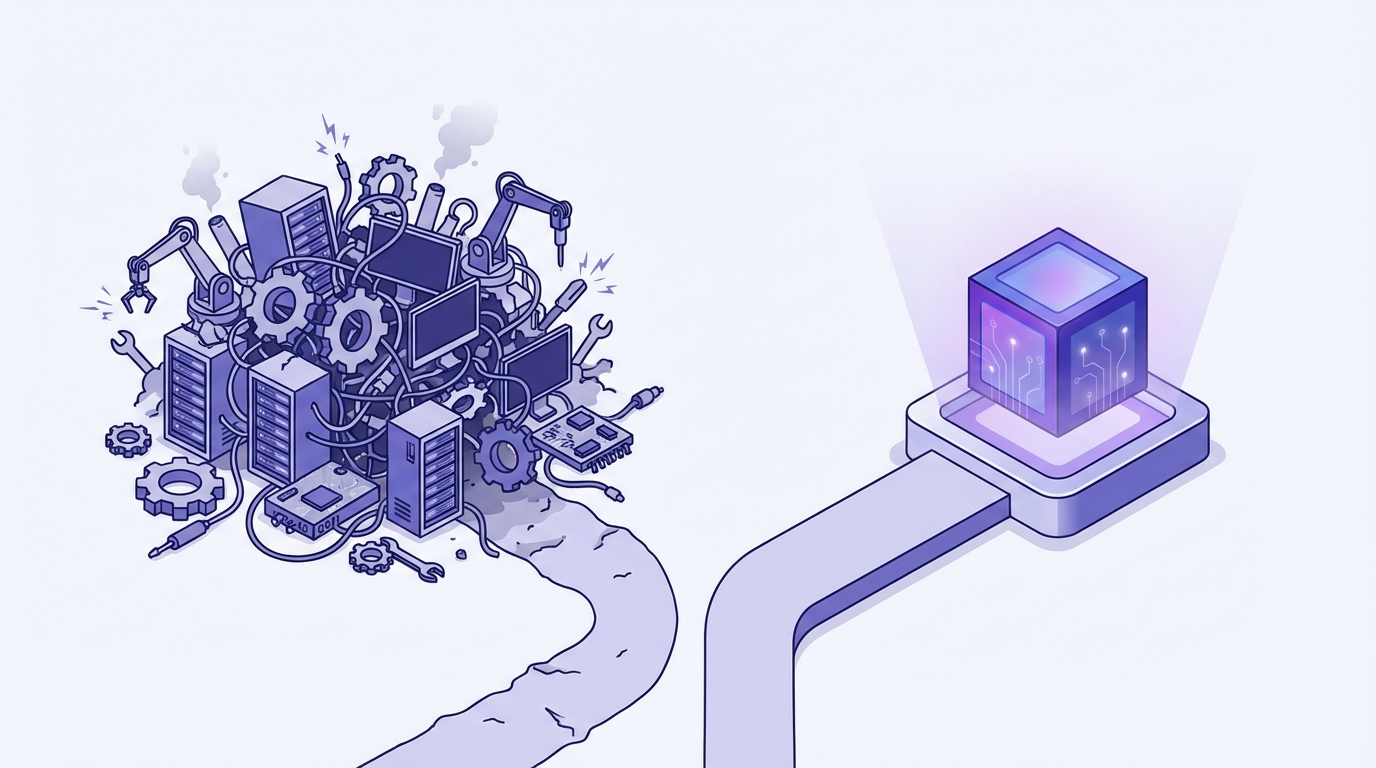

Building your own AI agents sounds appealing until you add up the real costs. Here's the honest math on build vs. buy for AI agent platforms.

Every engineering team that discovers the OpenAI API has the same thought: "We could build this ourselves." And they're right. You can build your own AI agents. You can also build your own database, your own email server, and your own CDN. The question isn't whether you can. It's whether you should.

I've watched dozens of teams go down the DIY AI agent path. The pattern is remarkably consistent: excitement in month one, complexity in month three, quiet abandonment by month six. Not because the engineers aren't talented. Because the problem is deeper than it looks.

The Iceberg Problem

Building an AI agent that works in a demo takes about a week. Building one that works in production takes about six months. The difference is everything below the surface.

What You See (Week 1)

```python
import openai

client = openai.OpenAI()

response = client.chat.completions.create(
    model="gpt-4",
    messages=[
        {"role": "system", "content": "You are a helpful research analyst."},
        {"role": "user", "content": "Analyze our competitor landscape."},
    ],
)

print(response.choices[0].message.content)
```

That's maybe 10 lines of code. It works. The output looks impressive. Your CEO sees the demo and greenlights the project. Now the real work begins.

What You Don't See (Months 2-6)

Memory management. Your agent needs to remember things across sessions. Not just conversation history, but structured knowledge: facts, preferences, relationships, past decisions. You need a memory architecture: what to store, how to retrieve it, when to forget it, how to handle contradictions. This alone is a multi-month engineering project.
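To make those four decisions concrete, here's a minimal sketch of a memory store. Everything in it is hypothetical: the `AgentMemory` and `Fact` names, the "newest statement wins" rule for contradictions, and the time-based expiry for forgetting are assumptions chosen for illustration, not a recommended architecture.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Fact:
    value: str
    stored_at: float  # seconds, on whatever clock the caller uses


@dataclass
class AgentMemory:
    """Toy key-value memory: newest write wins, old facts expire."""
    ttl_seconds: float
    facts: dict[str, Fact] = field(default_factory=dict)

    def remember(self, key: str, value: str, now: float) -> None:
        # Contradiction policy (an assumption): the latest statement replaces the old one.
        self.facts[key] = Fact(value, now)

    def recall(self, key: str, now: float) -> Optional[str]:
        fact = self.facts.get(key)
        if fact is None:
            return None
        if now - fact.stored_at > self.ttl_seconds:
            # Forgetting policy (an assumption): drop facts older than the TTL.
            del self.facts[key]
            return None
        return fact.value


memory = AgentMemory(ttl_seconds=3600)
memory.remember("preferred_crm", "Salesforce", now=0)
memory.remember("preferred_crm", "HubSpot", now=60)  # contradicts the earlier fact
print(memory.recall("preferred_crm", now=120))       # HubSpot
print(memory.recall("preferred_crm", now=10_000))    # None: the fact has expired
```

Even this toy version forces policy choices. A production system also has to decide how to retrieve by meaning rather than by exact key, which is where most of the real work sits.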

Tool integration. Your agent needs to interact with external systems: CRM, email, calendar, Slack, databases, APIs. Each integration needs authentication, error handling, rate limiting, retry logic, and schema management. When Salesforce changes its API, your agent breaks at 2 AM.
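Retry logic is the easiest item on that list to illustrate. The sketch below retries a failing tool call with exponential backoff. `TransientToolError`, `call_with_retry`, and the fake CRM lookup are all hypothetical names; a real integration would map its own client's timeout and rate-limit errors onto something similar.

```python
import time


class TransientToolError(Exception):
    """Hypothetical error for failures worth retrying (timeouts, rate limits)."""


def call_with_retry(tool, *args, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call tool(*args), retrying transient failures with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return tool(*args)
        except TransientToolError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...


# Stand-in for a CRM lookup that fails twice before succeeding.
calls = {"count": 0}


def flaky_crm_lookup(account_id):
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientToolError("rate limited")
    return {"account_id": account_id, "status": "active"}


# Pass a no-op sleep so the example runs instantly.
result = call_with_retry(flaky_crm_lookup, "acct-42", sleep=lambda seconds: None)
print(result, "after", calls["count"], "attempts")
```

This covers one failure mode for one call. Multiply it by authentication refresh, schema drift, and per-vendor rate limits to get a sense of the integration workload.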

Reliability. LLMs are non-deterministic. The same prompt can produce different outputs. Your agent might work perfectly 95% of the time and fail catastrophically 5% of the time. Handling edge cases, implementing guardrails, building fallback logic, and creating monitoring systems is where most of the engineering time goes.
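One common guardrail pattern is to validate the model's output, retry on failure, and fall back to an explicit "needs review" result rather than pass bad output downstream. The sketch below assumes the agent is supposed to return JSON with a `summary` string. The `validated_answer` function, that schema, and the fallback shape are all hypothetical.

```python
import json


def validated_answer(generate, max_attempts=3):
    """Ask generate() for JSON with a 'summary' string; fall back if it never complies."""
    for _ in range(max_attempts):
        raw = generate()
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed output: ask again
        if isinstance(data, dict) and isinstance(data.get("summary"), str):
            return data
    # Fallback: flag for human review instead of returning a bad answer.
    return {"summary": None, "needs_review": True}


# Stand-in for a non-deterministic model: first output is malformed, second is valid.
outputs = iter(["Sure! Here is the analysis...", '{"summary": "Three main competitors."}'])
print(validated_answer(lambda: next(outputs)))
```

The validation here only checks shape. Checking that the summary is actually correct is the evaluation problem, and it is much harder.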

Evaluation. How do you know if your agent is actually good? You need evaluation frameworks, test suites, regression testing for prompt changes, and metrics that capture quality beyond "did it respond." This is an active research area. You're competing with teams of PhDs.

Orchestration. Once you have multiple agents or multi-step workflows, you need orchestration: task routing, handoffs, parallel execution, error recovery, and state management. This is distributed systems engineering, and it's hard.

Context window management. Models have finite context windows. Your agent needs strategies for what to include, what to summarize, and what to drop. Get this wrong and your agent either runs out of context mid-task or wastes tokens on irrelevant information.
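The simplest strategy is to keep the system prompt and as many of the most recent messages as fit in a token budget. Here's a rough sketch. The `estimate_tokens` heuristic (about four characters per token) is a crude assumption standing in for a real tokenizer, and `fit_to_budget` is a hypothetical helper that expects the system prompt to be the first message.

```python
def estimate_tokens(text):
    """Crude stand-in for a real tokenizer: roughly one token per four characters."""
    return max(1, len(text) // 4)


def fit_to_budget(messages, budget):
    """Keep the system prompt (messages[0]), then the newest messages that fit."""
    system, history = messages[0], messages[1:]
    remaining = budget - estimate_tokens(system["content"])
    kept = []
    for message in reversed(history):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if cost > remaining:
            break  # this message and everything older is dropped
        kept.append(message)
        remaining -= cost
    return [system] + kept[::-1]  # restore chronological order


conversation = [
    {"role": "system", "content": "You are a helpful research analyst."},
    {"role": "user", "content": "Old question. " * 50},
    {"role": "assistant", "content": "Old answer. " * 50},
    {"role": "user", "content": "Analyze our competitor landscape."},
]
trimmed = fit_to_budget(conversation, budget=40)
print([m["role"] for m in trimmed])  # ['system', 'user']
```

Dropping old messages outright is the blunt version. Summarizing them instead means another model call, another prompt to maintain, and another place for errors to creep in.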

Security and compliance. Your agent has access to company data and external systems. You need audit trails, access controls, data handling policies, and compliance documentation. For regulated industries, this alone can consume months.

The Real Cost Breakdown

Let's do the math honestly.

DIY AI Agent: Year One Costs

| Cost Category | Estimate |
|---|---|
| Senior ML/AI Engineer (1 FTE) | $180,000 - $250,000 |
| Backend Engineer (0.5 FTE) | $80,000 - $120,000 |
| DevOps/Infrastructure (0.25 FTE) | $40,000 - $60,000 |
| LLM API costs (GPT-4/Claude) | $12,000 - $36,000 |
| Infrastructure (compute, storage, vector DB) | $6,000 - $18,000 |
| Opportunity cost of delayed features | Hard to quantify |
| Total Year 1 | $318,000 - $484,000 |

And that's for one agent. Each additional agent type (research, content, ops) adds incremental cost.

Managed AI Operator: Year One Costs

| Cost Category | Estimate |
|---|---|
| Platform subscription (self-service) | $6,000 - $9,600 |
| Platform subscription (fully managed) | $60,000 - $80,000 |
| Integration/setup (one-time) | $0 - $15,000 |
| Internal project management (0.1 FTE) | $15,000 - $25,000 |
| Total Year 1 (fully managed) | $75,000 - $120,000 |

The managed approach costs roughly 25% of DIY. But the cost advantage isn't even the main point.
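If you want to check the arithmetic or plug in your own numbers, the short script below sums the line items from the two tables. It treats the two subscription tiers as alternatives, each combined with the setup and project-management rows. That reading of the managed table is an assumption, and the variable names are mine.

```python
# Line items from the two cost tables, as (low, high) in USD for year one.
diy = {
    "Senior ML/AI Engineer (1 FTE)": (180_000, 250_000),
    "Backend Engineer (0.5 FTE)": (80_000, 120_000),
    "DevOps/Infrastructure (0.25 FTE)": (40_000, 60_000),
    "LLM API costs": (12_000, 36_000),
    "Infrastructure": (6_000, 18_000),
}
managed_shared = {
    "Integration/setup (one-time)": (0, 15_000),
    "Internal project management (0.1 FTE)": (15_000, 25_000),
}
subscriptions = {
    "self-service": (6_000, 9_600),
    "fully managed": (60_000, 80_000),
}


def total(items):
    """Sum the low ends and the high ends separately."""
    lows, highs = zip(*items.values())
    return sum(lows), sum(highs)


diy_low, diy_high = total(diy)
print(f"DIY: ${diy_low:,} - ${diy_high:,}")

shared_low, shared_high = total(managed_shared)
for tier, (sub_low, sub_high) in subscriptions.items():
    low, high = sub_low + shared_low, sub_high + shared_high
    print(f"{tier}: ${low:,} - ${high:,} "
          f"({low / diy_low:.0%} - {high / diy_high:.0%} of DIY)")
```

The opportunity-cost row is left out because the table doesn't put a number on it.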

The Cost-Per-Hour Comparison

At OpFleet, a self-service operator runs at $0.68 per hour of always-on work. A fully managed operator, with white-glove setup, dedicated support, and custom integrations, comes to $6.85 per hour. Both are all-in: compute, memory, tool access, and infrastructure.

A human doing equivalent work costs $35-75/hour for junior staff, $75-150/hour for senior staff, more for specialized roles. The AI operator doesn't take PTO, doesn't need benefits, and works at 3 AM without overtime.

But here's the calculation people miss: the DIY approach doesn't save you that $0.68-$6.85/hour. You're paying engineer salaries to build what the platform already provides. Your "free" homegrown agent actually costs more per hour of productive work than the managed alternative because you're amortizing hundreds of thousands in development costs over a smaller number of productive hours.
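Here's that amortization as a quick calculation. The hourly rates quoted above appear to match the low-end annual subscriptions spread over 8,760 hours (24 x 365); that derivation is my inference, not something stated explicitly. The 2,000-hour figure for a homegrown agent's productive time is purely hypothetical, so substitute your own.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours of always-on operation


def cost_per_hour(annual_cost, productive_hours=HOURS_PER_YEAR):
    """Spread an annual cost over the hours the agent does useful work."""
    return annual_cost / productive_hours


# Low-end annual subscriptions from the managed table.
print(f"self-service:     ${cost_per_hour(6_000):.2f}/hour")
print(f"fully managed:    ${cost_per_hour(60_000):.2f}/hour")

# Low-end DIY year-one total: always-on, then at a hypothetical 2,000 productive hours.
print(f"DIY, always-on:   ${cost_per_hour(318_000):.2f}/hour")
print(f"DIY, 2,000 hours: ${cost_per_hour(318_000, 2_000):.2f}/hour")
```

Even in the best case, where the homegrown agent is productive around the clock from day one, the low-end DIY total works out to about $36 per hour.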

The Five Traps of DIY

Trap 1: The "We're Different" Fallacy

"Our use case is unique, so we need a custom solution." This is almost never true. Your business is unique. Your AI agent infrastructure is not. You need memory, tool integration, orchestration, and reliability, just like everyone else. The custom part is configuration, not architecture.

Trap 2: The Prototype Plateau

Your prototype works great on happy paths. Then you encounter the long tail of edge cases. The agent misinterprets an ambiguous request. A tool call fails silently. The context window fills up mid-task. Each edge case takes disproportionate effort to handle. Teams get stuck in an endless cycle of "almost production-ready."

Trap 3: The Maintenance Multiplier

LLM providers ship breaking changes. New models have different behaviors. Prompt engineering that worked with one model generation breaks with the next. Vector database performance degrades as data grows. Each component of your stack requires ongoing maintenance, and the compounding effect is brutal.

Trap 4: The Talent Bottleneck

The engineers who can build production AI agent systems are expensive and scarce. When your AI engineer leaves (and in this market, they will), their institutional knowledge about your custom agent architecture leaves with them. The remaining team inherits a complex system they didn't build and don't fully understand.

Trap 5: The Innovation Gap

While your team is building basic infrastructure, managed platforms are shipping features you haven't even considered yet. Adaptive memory systems. Multi-agent collaboration. Sophisticated evaluation frameworks. Progressive autonomy. You're building a bicycle while the market is shipping motorcycles.

When DIY Actually Makes Sense

I'm not saying DIY is never the right choice. It makes sense when:

- AI agents are your core product. If you're selling AI agents, you should probably build the infrastructure. If you're using them internally, buy.

- You have extreme regulatory requirements. Some industries (defense, certain healthcare applications) genuinely can't use third-party platforms. But this is rarer than people think.

- You need a very narrow, simple agent. If your use case is a single-purpose bot that does one thing, a managed platform might be overkill. But these cases rarely stay simple.

- You have a large, dedicated AI platform team. If you already have 5+ engineers dedicated to AI infrastructure, the incremental cost of building agents on your own platform is lower.

For everyone else, the math points to managed.

The Build vs. Buy Decision Framework

When evaluating whether to build or buy, score yourself from 1 to 5 on these dimensions, where a higher score favors building:

1. Core competency alignment (1-5) Is building AI agent infrastructure core to your business? If you're a SaaS company that wants AI operators for internal workflows, the answer is 1. If you're an AI platform company, it's 5.

2. Engineering capacity (1-5) Do you have spare senior engineering capacity? Not "could we hire someone," but "do we have someone available now who has built production AI systems before?"

3. Time sensitivity (1-5) How quickly do you need results? DIY takes 6+ months to production. Managed platforms can deploy operators in weeks. If you need results this quarter, buy (score 1); score higher the longer you can afford to wait.

4. Scale requirements (1-5) How many different agent types do you need? One simple agent might justify DIY (score high). Five specialized operators definitely justify managed (score low).

5. Maintenance commitment (1-5) Are you prepared to maintain this indefinitely? AI infrastructure isn't "build once and forget." It requires ongoing investment as models, APIs, and best practices evolve.

If your total score is under 15, buy. If it's over 20, consider building. In between, lean toward buying and reassess in a year.
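The scoring rule is simple enough to write down as code. This is a sketch with hypothetical names (`DIMENSIONS`, `recommend`); it assumes every dimension is scored so that a higher number favors building, which is how the thresholds read.

```python
DIMENSIONS = (
    "core_competency",
    "engineering_capacity",
    "time_sensitivity",
    "scale_requirements",
    "maintenance_commitment",
)


def recommend(scores):
    """Map five 1-5 scores to a recommendation using the thresholds above."""
    missing = set(DIMENSIONS) - set(scores)
    if missing:
        raise ValueError(f"missing scores: {sorted(missing)}")
    if any(not 1 <= scores[d] <= 5 for d in DIMENSIONS):
        raise ValueError("each score must be between 1 and 5")
    total = sum(scores[d] for d in DIMENSIONS)
    if total < 15:
        return total, "buy"
    if total > 20:
        return total, "consider building"
    return total, "lean toward buying, reassess in a year"


# Example: a SaaS company that wants operators for internal workflows.
saas_company = {
    "core_competency": 1,
    "engineering_capacity": 2,
    "time_sensitivity": 2,
    "scale_requirements": 2,
    "maintenance_commitment": 3,
}
print(recommend(saas_company))  # (10, 'buy')
```

The example scores are made up. The point is that with five dimensions, a total above 20 requires an average above 4, so "consider building" is a high bar by design.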

The Pragmatic Path

The smartest approach I've seen is what I call "buy then build." Start with a managed platform to get operators running quickly. Learn what works, what your actual requirements are, and where you need customization. Then, if and only if the managed platform can't meet a specific need, build that specific component.

This approach lets you capture value immediately while making informed build decisions based on real usage data rather than assumptions.

Most companies that start with "build first" never finish. Most companies that start with "buy first" are running AI operators within weeks and iterating on what matters: the business logic, not the infrastructure.

The infrastructure is the boring part. The business logic is where the magic happens. Spend your engineering talent accordingly.